Weekly Wonk: Weighing Tensions & Tradeoffs in Evidence-Based Policy

What works, who pays, & why it matters

From the Founder’s Desk

Evidence plays an essential load-bearing role in child and family policy.

That doesn’t mean the story around that role is simple.

Knowing what works matters for promoting optimal outcomes, avoiding harm, and efficiently and effectively allocating limited resources.

Evidence gives a granular understanding of not just whether something works but how, why, for whom, and under what circumstances. It drives deliberation forward.

Last week’s Wonkcast episode with Mike Shaver unpacked the role his organization, Brightpoint, plays in studying whether and in what circumstances cash assistance works.

The tensions and trade-offs emerge when considering how to structurally incorporate evidence into the foundational design of public policy.

Evidentiary standards in policy can create an essential sorting mechanism to ensure federal resources focus on proven approaches.

They can also create a reinforcing loop: scale and capitalization, key requirements for building robust evidence, are rewarded with more financing and scaling support.

Take the Family First Prevention Services Act.

The law uses rigorous standards to determine what federal financing will pay for to keep children safely at home and out of foster care.

Its design was structured to create incentives for developing and leveraging evidence. But implementation suggests it created a bottleneck for deployment of interventions.

In this week’s Deep Dive, Kimberly Martin examines that tension, unpacking how well-intentioned policy design can turn evidence-based standards into a barrier.

We also preview last week’s premium Wonk Briefing Room piece by Laura Radel and Brett Greenfield, showing the multiple drivers behind declining foster care nationally.

Let’s get into it.

Special thanks to Binti for their foundational sponsorship of WonkCast.

From the Wonk Briefing Room

The foster care decline is an easy headline to oversimplify.

As we showed in Part I of this series, the number of children in foster care is down more than 20 percent from its recent peak.

In this week’s premium Decoded brief, Laura Radel and Brett Greenfield ask a sharper question: are the declines happening across the country actually the same story?

This is Part II of our Decoded series on declining foster care rates. The final part will be published later this month.

Decoded: Mapping the Foster Care Decline

By Laura Radel, Senior Contributor, and Brett Greenfield

Last month, we examined the national decline in foster care caseloads, down 23 percent from the most recent peak in 2018.

But those declines are not self-explanatory.

They can signal prevention working, system capacity tightening, screening thresholds shifting, pandemic-era distortions – or some combination of all four.

Understanding what’s driving them matters for decision-makers.

In this piece, we look under the hood.

Rather than treating the national trend as a single story, we examine the eight states with the largest numerical declines in foster care between 2018 and 2023.

Together, these states account for 60 percent of the national decline. If there is a structural shift underway, it shows up here first.

Not One Decline, But Several

Across the eight states driving most of the national foster care decline, the topline numbers move in the same direction. The underlying dynamics do not.

Each state experienced reductions in both the rate of foster care entries and the rate of children in care overall. But the relationship between those two trends varies in ways that matter.

Those variations cluster into patterns that point to different driving forces of the overall decline.

Across the Board Declines

In Indiana, Oregon, and Pennsylvania, declines in entry rates closely tracked declines in the overall foster care population.

This pattern reflects relatively stable system dynamics as caseloads fall: entries declined, and that decline tracked with a corresponding rise in exits.

To read the rest of this premium brief with charts and tables, and get all our others, sign up for the Wonk Briefing Room here.

Weekly Wonk Deep Dive

Decoded: The Catch in “Evidence-Based”

By Kimberly J. Martin, JD

Evidence-based policymaking in human services sounds like common sense.

But in child welfare and other public systems, this label has become less gold standard and more gatekeeper to public dollars.

The term doesn’t always signal effectiveness.

It often reflects the extent of funding behind an intervention — the ability to afford the years of formal evaluation required to be listed in a federal clearinghouse.

That price tag can shut out community-rooted solutions, especially those created by and for marginalized families.

This piece examines how our over-reliance on evidence-based interventions narrows what gets funded, who gets to provide services, and whose knowledge counts as valid.

Only What’s Measurable Gets Funded

Evidence standards were designed to prevent ineffective or harmful interventions from receiving public dollars.

The question isn’t whether we need accountability. It’s whether our current standards are securing it, and whether they’re the only path to it.

Programs earn evidence-based status because they can afford to undergo years of formal evaluation, often through randomized controlled trials or other high-cost studies.

The result?

Public dollars flow to what can be measured, not necessarily to what communities trust, use, or value.

Black-led healing circles, tribal kinship networks, rural peer-support models, and community-rooted mentorship rarely appear in federal clearinghouses.

But that absence isn’t proof of ineffectiveness; it reflects a policy design to which those approaches aren’t legible.

These models are often unevaluated, not because they’ve failed, but because existing incentives and funding structures don’t support evaluating them.

When the evidence base is limited to what’s been resourced, we mistake absence of evidence for evidence of absence.

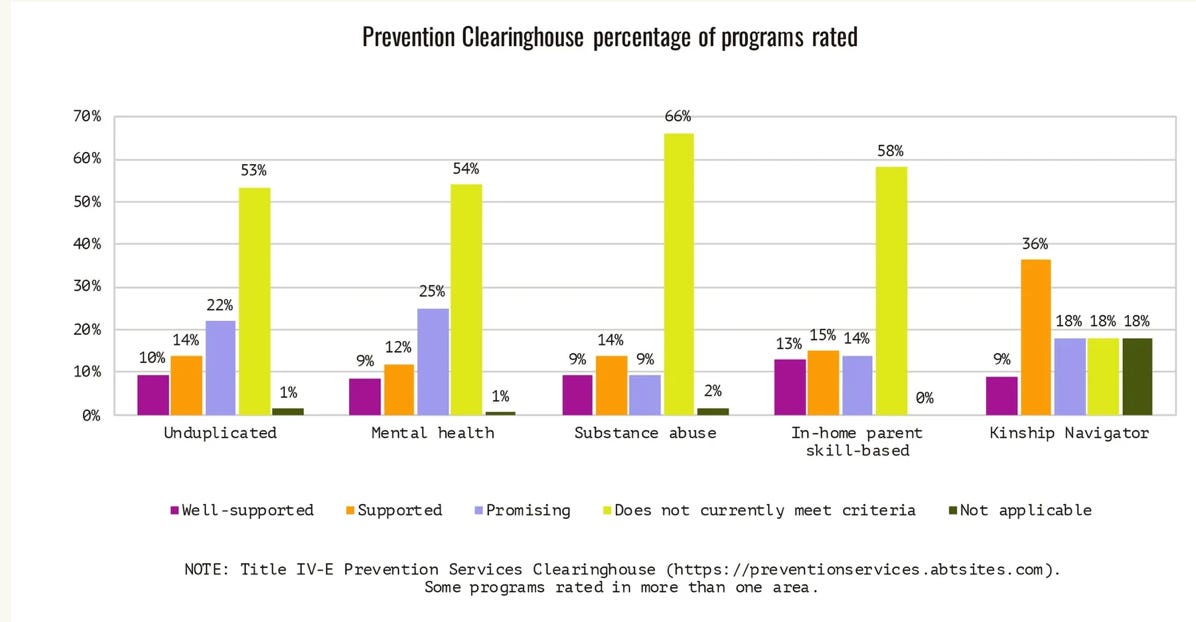

As of July 2025, of 210 programs reviewed by the Title IV-E Prevention Services Clearinghouse, only 95 met the threshold to be rated as “well-supported,” “supported,” or “promising,” leaving 115 either unrated or judged to not currently meet criteria.

And of those 95, the most important number is how many fall into the highest, “well-supported” category.

Because the law requires half of a state’s spending to be in that top category, most agencies understandably focus only on those top-rated programs.

Translation: dozens of promising interventions - especially those created by and for historically excluded communities - don’t have access to federal reimbursement.

Not because they’ve failed, but because the policy design creates barriers they can’t surmount.

Local leaders, frontline staff, and families continue using them because they see the impact, but that rarely counts as evidence.

For example, Parent Partner programs, like those in California and Iowa, pair families with trained advocates who’ve navigated the child welfare system themselves.

Family Group Decision Making, used widely across the country, brings relatives together to plan for children’s safety.

Both approaches are trusted by families and frontline staff.

But because they resist standardization and haven’t cleared formal evidence bars, they often remain unfunded or underfunded.

Programs are left in limbo: effective enough to be trusted, but not funded. Why?

Because clearing the evaluation bar takes more than proof: it takes capital, consultants, and connections.

Who Decides What Counts?

Rigorous evaluation tends to reward interventions that are clinical and controllable, those that can be tested, scaled, and replicated.

But the very things that make a program effective in practice - cultural grounding, relational trust, local adaptation - rarely make it into the evidence base.

Peer-support programs run by formerly incarcerated people, for example, are excluded from many funding streams because their effectiveness rests on shared lived experience, not standardized protocols.

Tribally anchored kinship networks that have preserved child safety for generations are often excluded in favor of non-Native models that meet Western data standards.

Even when funding is available, many community practices resist replication and standardization, not because they’re flawed, but because they’re designed for context.

What works in one community may need to look different in another.

Policymakers, in seeking accountability and bipartisan support, often turn to evidence-based requirements as a safeguard against waste and harm.

The rising risk is that this safeguard has become a bottleneck.

By restricting funding to models that meet narrow evaluation criteria, policy can exclude many community solutions by default.

This also creates a reinforcing feedback loop: programs that clear formal evaluation thresholds get more funding, scale, and institutional ties, while those that don’t lose relative access to those structural supports.

Diluted in the Name of Fidelity

Even programs that do make it through the evidence gauntlet often emerge changed.

Take Nurse-Family Partnership: what began as a grassroots home-visiting program evolved into a nationally recognized, evidence-based model.

But in the process of scaling and securing policy investment, it also had to become more repeatable and tightly controlled.

This understandable shift, necessary to meet fidelity and evaluation demands, also limits the very local adaptation that made it resonate in the first place.

This isn’t a critique of NFP, but a recognition of the system design that makes rigidity the price of recognition.

To become legible to policymakers, programs often have to prioritize standardization over cultural specificity, and compliance over connection.

Insisting on that legibility produces a paradox: what makes a program scalable can also make it less responsive to the communities it aims to serve.

We Need More Than One Way to Know Something Works

Rigor matters, and it isn’t limited to formal evaluations that meet narrow academic standards.

When we confuse validation with value, we end up outsourcing our trust, waiting for institutions to green-light practices that communities already see working.

Families go without support while systems wait for statistical proof.

This isn’t about “doing what feels right.”

It’s about recognizing that effectiveness can be measured in more than one way, and that insisting on one standard delays support, distorts impact, and discredits what communities already know.

Participatory action research, culturally responsive evaluation, and community-based participatory research (CBPR) all demonstrate how to marry rigor with relevance.

For example, the Indigenous Evaluation Framework developed by the American Indian Higher Education Consortium (AIHEC) prioritizes tribal values and local definitions of success while maintaining strong methodological integrity.

Similarly, REDCap projects that are co-designed with communities have helped health and human services agencies center equity in data collection without sacrificing analytical power.

The Bottom Line

Evidence was meant to open doors, not close them.

When it becomes a gatekeeper rather than a guide, we risk losing sight of what actually works for families and communities.

It also raises an uncomfortable question for leaders: which effective approaches are we under-leveraging because their impact is real but not legible to our metrics?

Kimberly Martin is a policy strategist bringing legal insight and lived experience to the child welfare conversation.

—

That’s it for this week!